There’s always been a lot of talk about the value of search engine optimization (SEO) for literally any kind of online business you can think of.

Online shops, marketing agencies, software as a service (SaaS) providers – you name it!

But one industry that could certainly benefit from more attention to SEO is paid advertising.

More specifically – the vast and distributed network of domains that help every advertiser deliver their message to every local market on the World Wide Web.

The commandments of SEO are often neglected by online ad publishers and affiliate marketers alike, with the importance of online visibility often eclipsed by the constant chase to score the best offers and expand the number of ad slots.

And yet, as our story is about to reveal, SEO is one of the primary pillars of online advertising. And it can either make or break your ad publishing business.

An Overview of Our Research

The ultimate goal of our study was to paint a clear picture of what makes the online ad publishing business tick, and to share it with the community.

So here are the questions that we wanted to have answered:

- What are the general trends in online ad publishing?

- Are affiliate marketers, content creators, and website owners squeezing the most out of their online mediums?

- What are the common mistakes publishers make when trying to monetize their online content?

- And, most importantly, are these challenges really that hard to fix?

Well, to put it lightly, we were in for a lot of surprises that we are eager to share with you right away.

The Key Takeaways of Our Research

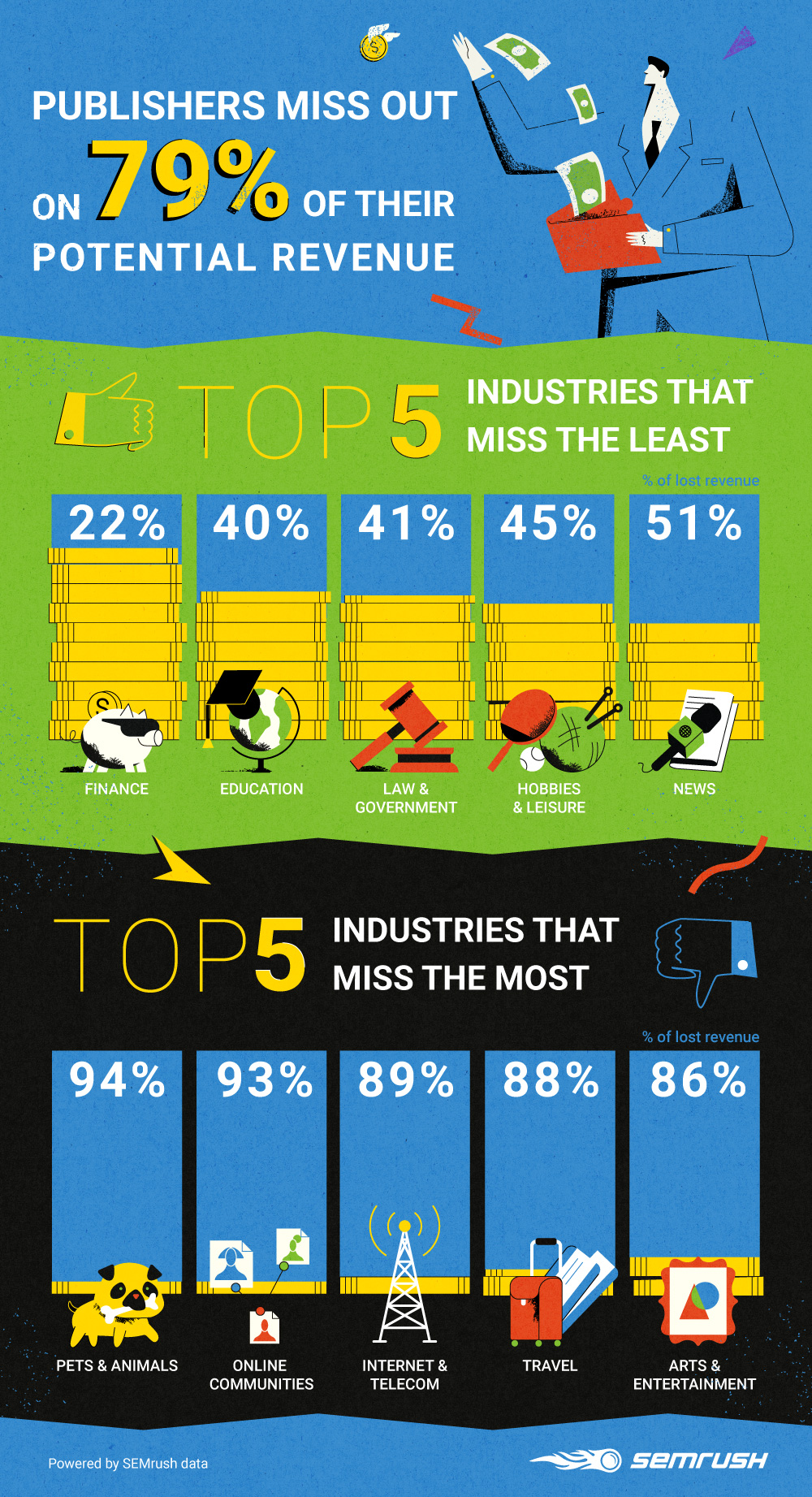

On Average, Publishers Miss Out on 79% of their Revenue

Using three of our popular tools (Traffic Analytics, Site Audit and On-Page SEO Checker), we studied more than 30,000 ad publisher domains across 20 different industries.

As it turns out, your average ad publisher loses the lion’s share of their potential monthly revenue to various design flaws, poor business decisions, and technical issues.

![10 Most Common SEO Mistakes of Online Ad Publishers [SEMrush Research]](https://cdn.searchenginejournal.com/wp-content/uploads/2019/09/data-powered-guide-for-monetization-chart.jpg)

Now let’s see the exact problems with SEO that cause such a major share of revenue to go down the drain.

Top 10 Technical SEO Mistakes (Data Powered by Site Audit)

SEO failures account for a large part of the revenue publishers lose every month. With 79% of their potential revenue going down the drain, many publishers tend to overestimate how complex the SEO issues plaguing their business really are.

The truth is, the top 10 technical SEO mistakes we found on publisher domains turned out to be rather simple:

![10 Most Common SEO Mistakes of Online Ad Publishers [SEMrush Research]](https://cdn.searchenginejournal.com/wp-content/uploads/2019/09/data-powered-guide-for-monetization-chart4.jpg)

If you’re new to SEO and the terms above don’t make much sense to you yet, or if you’d just like a reminder, let’s take a closer look at what each mistake in the list stands for:

1. The 4XX Status Code

This class of error usually means something went wrong on the client side, and the user (or a search engine bot) failed to access a certain webpage.

When it comes to the 4xx’s, your usual suspects are:

- 403 Forbidden: This happens when a user is denied access to a certain resource by the server. Usually this means you have one or several links to restricted webpages publicly available somewhere on your domain.

- 404 Not Found: Arguably the people’s champion when it comes to website errors, this one is triggered whenever a user tries to access a page that no longer exists, has moved somewhere else, or never existed in the first place.
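To make the idea of a status code "class" a bit more concrete, here is a minimal Python sketch. It assumes you already have crawl results as a mapping of URL to status code (the `crawl_results` shape and both function names are hypothetical, not part of any SEMrush tool), and it only uses the standard library's `http.HTTPStatus` to look up the official reason phrase.

```python
from http import HTTPStatus


def describe_status(code: int) -> str:
    """Return a label such as '404 Not Found (client error)'."""
    try:
        phrase = HTTPStatus(code).phrase
    except ValueError:
        # Code is not in the standard library's registry.
        phrase = "Unknown"
    if 400 <= code <= 499:
        kind = "client error"
    elif 500 <= code <= 599:
        kind = "server error"
    else:
        kind = "not an error"
    return f"{code} {phrase} ({kind})"


def client_errors(crawl_results: dict) -> dict:
    """Keep only the URLs whose status code falls in the 4xx range.

    crawl_results is a hypothetical mapping of URL -> status code.
    """
    return {url: code for url, code in crawl_results.items() if 400 <= code <= 499}


if __name__ == "__main__":
    sample = {"/offers": 200, "/members-only": 403, "/old-landing-page": 404}
    for url, code in client_errors(sample).items():
        print(url, "->", describe_status(code))
```

Filtering on the numeric range rather than on specific codes means less common client errors (such as 410 Gone) get caught as well.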

2. Broken Internal Links

This is a more specific case of error 404: a link that sends a user to a certain nonexistent page on your own domain.
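As a rough illustration of how such links can be spotted, the sketch below parses one page's HTML, resolves every `<a href>` against the page URL, and reports links that stay on the same domain but point to a path that is not in a known list of pages. The `existing_pages` set is an assumption standing in for whatever a real crawler would discover; nothing here reflects how Site Audit itself works.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def broken_internal_links(page_url, html, existing_pages):
    """Return internal link targets whose path is not in existing_pages.

    existing_pages is a hypothetical set of paths known to exist on the site.
    """
    collector = LinkCollector()
    collector.feed(html)
    site = urlparse(page_url).netloc
    broken = []
    for href in collector.links:
        target = urljoin(page_url, href)  # resolve relative links
        parsed = urlparse(target)
        if parsed.netloc == site and parsed.path not in existing_pages:
            broken.append(target)
    return broken


if __name__ == "__main__":
    html = '<a href="/about">About</a> <a href="/old-offer">Old offer</a>'
    print(broken_internal_links("https://example.com/blog/", html, {"/about", "/blog/"}))
```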

3. Pages Don’t Have a Unique Title

Titles help search engines and human users understand what each page of your website is about without grinding through its entire content.

If you don’t supply your webpages with unique titles, it can be complicated to tell the difference between them – both for a live user and a Google crawler.

4. Pages Don’t Have a Unique Meta Description

A meta description provides users with a brief overview of any webpage suggested in a search engine results page (SERP), thus playing a major role in whether they’ll actually open it.

So creating a unique description for every page (as long as it makes sense) is likely to increase your organic CTR.
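Both of the last two issues, duplicate titles and duplicate meta descriptions, can be checked in a similar way. The sketch below is one possible approach, assuming you have the HTML of each page in a dictionary keyed by URL (the `pages` mapping and the helper names are hypothetical). It extracts the `<title>` text and the `description` meta tag, then groups URLs that share the same value.

```python
from collections import defaultdict
from html.parser import HTMLParser


class HeadExtractor(HTMLParser):
    """Collects the <title> text and the meta description of one page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def find_duplicates(pages):
    """pages maps URL -> HTML (hypothetical input shape).

    Returns two dicts: shared title -> URLs, shared description -> URLs.
    """
    by_title, by_description = defaultdict(list), defaultdict(list)
    for url, html in pages.items():
        extractor = HeadExtractor()
        extractor.feed(html)
        by_title[extractor.title.strip()].append(url)
        by_description[extractor.description].append(url)
    dup_titles = {t: urls for t, urls in by_title.items() if t and len(urls) > 1}
    dup_descriptions = {d: urls for d, urls in by_description.items() if d and len(urls) > 1}
    return dup_titles, dup_descriptions
```

Pages with no title or description at all are skipped here on purpose; a missing tag is a different problem from a duplicated one.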

5. Pages Contain Links to HTTP Pages

A website’s security is a big deal for lots of online users and services, including Google.

So links pointing to resources using the not-secure HTTP protocol can have a significant negative impact on your online visibility.
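Finding these links is mostly a matter of scanning a page for `href` and `src` attributes that start with `http://`. Here is a small sketch of that idea using only Python's standard library; the class and function names are made up for this example.

```python
from html.parser import HTMLParser


class InsecureLinkFinder(HTMLParser):
    """Records every href/src attribute that uses plain HTTP."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


def find_insecure_links(html):
    """Return (tag, url) pairs for links and resources served over HTTP."""
    finder = InsecureLinkFinder()
    finder.feed(html)
    return finder.insecure


if __name__ == "__main__":
    page = '<a href="http://example.com/offer">Offer</a><img src="https://example.com/banner.png">'
    print(find_insecure_links(page))
```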

6. Pages Couldn’t Be Crawled

This issue means a search engine’s robot could not process (or crawl) a certain page of your website and index all of its content.

Sadly, a page that isn’t crawled and indexed has no chance of making it to the SERP.
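A page can be uncrawlable for many reasons, and the study does not say which ones were most common. One cause that is easy to check yourself is an overly strict robots.txt file. The sketch below, which assumes robots.txt rules are the culprit, uses Python's built-in `urllib.robotparser` to list which URLs a given crawler would be told to skip. The `blocked_urls` helper and the sample rules are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    rules = "User-agent: *\nDisallow: /private/\n"
    candidates = [
        "https://example.com/blog/post",
        "https://example.com/private/offer",
    ]
    print(blocked_urls(rules, candidates))
```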

7. Duplicate Content Issues

If you’ve run into this issue, it means your website has substantial blocks of exactly identical or very similar content across multiple webpages.

In most cases, this issue is caused either by accident or by an oversight in technical implementation.
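To get a feel for what "very similar" can mean in practice, here is a simple sketch that compares the text of every pair of pages with `difflib.SequenceMatcher` from the standard library. The 0.9 threshold is an arbitrary assumption for illustration, not the cutoff any search engine or audit tool is known to use, and pairwise comparison only suits small sites.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(text):
    """Lowercase the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def near_duplicates(pages, threshold=0.9):
    """pages maps URL -> page text (hypothetical input shape).

    Returns (url_a, url_b, similarity) for every pair at or above threshold.
    """
    pairs = []
    for (url_a, text_a), (url_b, text_b) in combinations(pages.items(), 2):
        ratio = SequenceMatcher(None, normalize(text_a), normalize(text_b)).ratio()
        if ratio >= threshold:
            pairs.append((url_a, url_b, round(ratio, 2)))
    return pairs
```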

8. Slow Page Load Speed

In the age of high-speed Internet connections, users take it for granted that a website or an app loads in a flash.

Naturally, this makes page load speed an important contributor to user experience in Google’s eyes. That’s why pages that take too long to load can have a tougher time positioning well in the SERPs.

9. Wrong Pages in the Sitemap

This issue is triggered when your sitemap.xml (a file that helps crawlers navigate through your website) provides poor instructions for search engine crawlers (e.g., lists URLs of pages not intended for users to visit).
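If you want to check your own sitemap by hand, a sketch like the one below may help. It reads the `<loc>` entries from a standard sitemap.xml and flags any URL whose path starts with a prefix you consider off-limits for visitors. The `private_prefixes` list is an assumption you would supply yourself; the XML namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Return every <loc> URL listed in a sitemap.xml string."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def unwanted_sitemap_urls(sitemap_xml, private_prefixes):
    """Flag sitemap URLs whose path starts with one of private_prefixes.

    private_prefixes is a hypothetical list such as ["/admin/", "/cart/"].
    """
    prefixes = tuple(private_prefixes)
    return [url for url in sitemap_urls(sitemap_xml) if urlparse(url).path.startswith(prefixes)]
```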

10. Broken Internal Images

We’ve all been there – an image that failed to load is a massive disappointment to every online visitor, but, most importantly, to Google.

Broken images damage user experience and the overall content integrity of a webpage, which is reason enough for Google to lower your rankings.

Now, broken links and missing pages are hardly something to write home about. Anyone can fix these issues. The key is spotting them in the first place.

“Little things” like these usually just fly below the radar unless you have the right tools to keep your domain’s SEO health in check and keep track of all the room you have to improve your online visibility. Like, say, the SEMrush SEO Toolkit!

SEO Issues – ‘Popularity’ Breakdown

At this point you might be wondering just how common the aforementioned SEO issues are. So here’s a more detailed breakdown:

Technical SEO Issues – Occurrence Breakdown (Data powered by SEMrush Site Audit):

- 4XX status code – seen on 59% of all researched domains.

- Broken internal links – seen on 55% of all researched domains.

- Pages don’t have a unique title – seen on 46% of all researched domains.

- Pages don’t have a unique meta description – seen on 37% of all researched domains.

- Pages contain links to HTTP pages – seen on 36% of all researched domains.

- Pages couldn’t be crawled – seen on 34% of all researched domains.

- Duplicate content issues – seen on 31% of all researched domains.

- Slow page load speed – seen on 30% of all researched domains.

- Wrong pages in the sitemap – seen on 23% of all researched domains.

- Broken internal images – seen on 20% of all researched domains.

Best Ideas for Improving Your SEO (Data Powered by On-Page SEO Checker)

Fixing all the major technical issues alone will give a massive boost to your website’s online visibility, but you can always take your SEO game further.

After all, making it to the top of the SERP is not only about fixing what’s broken, but also capitalizing on what’s already good and making it even better.

So allow us to present the Top 10 most popular SEO ideas that we suggest every publisher should try:

![10 Most Common SEO Mistakes of Online Ad Publishers [SEMrush Research]](https://cdn.searchenginejournal.com/wp-content/uploads/2019/09/data-powered-guide-for-monetization-chart-1.jpg)

- Earn links from more sources – suggested to 99% of domains

- Enrich your page content with related words – suggested to 98% of domains

- Focus on creating more informative content – suggested to 91% of domains

- Use target keywords in tag – suggested to 89% of domains

- Make your text content more readable – suggested to 85% of domains

- Use target keywords in